In a previous post, Video File Transcoding with Open Source Cloud, we discussed how to set up a fully-fledged video transcoding pipeline using SVT Encore. In this follow-up, we will walk through recreating that setup using Terraform, an infrastructure-as-code (IaC) tool from HashiCorp, or its open-source alternative, OpenTofu.

Terraform enables you to automate the creation, management, and maintenance of infrastructure by defining it in code. By using HCL (HashiCorp Configuration Language), you can declaratively describe resources like servers, databases, and networks, and Terraform will handle their provisioning across a variety of platforms.

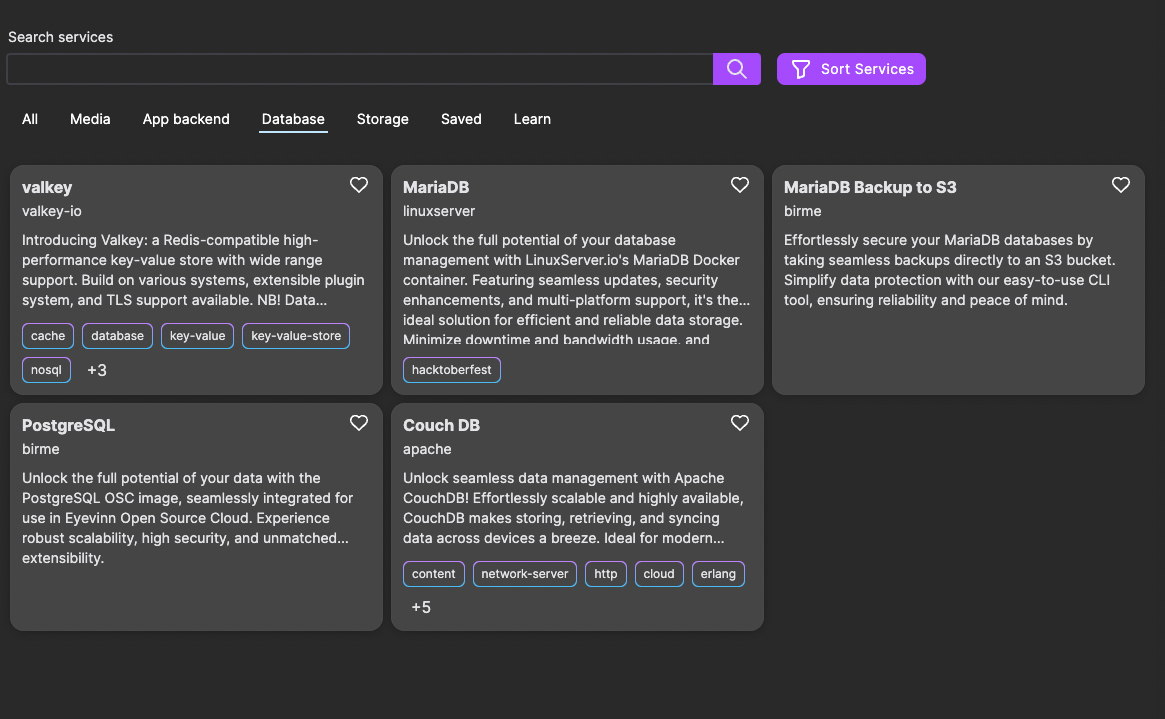

OSC Terraform Provider

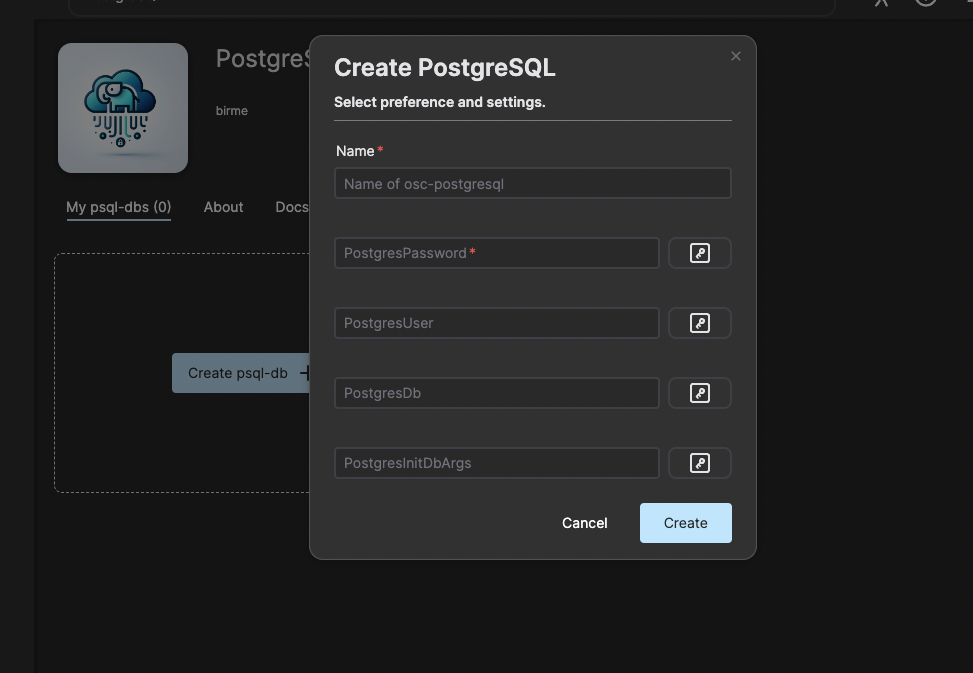

To simplify interaction with Open Source Cloud (OSC), we’ve created a Terraform provider. This allows you to easily spin up or tear down OSC resources directly through Terraform.

In this post, we’ll define a Terraform configuration file to describe the video file transcoding pipeline.

Prerequisites

- An OSC Account

- Terraform installed

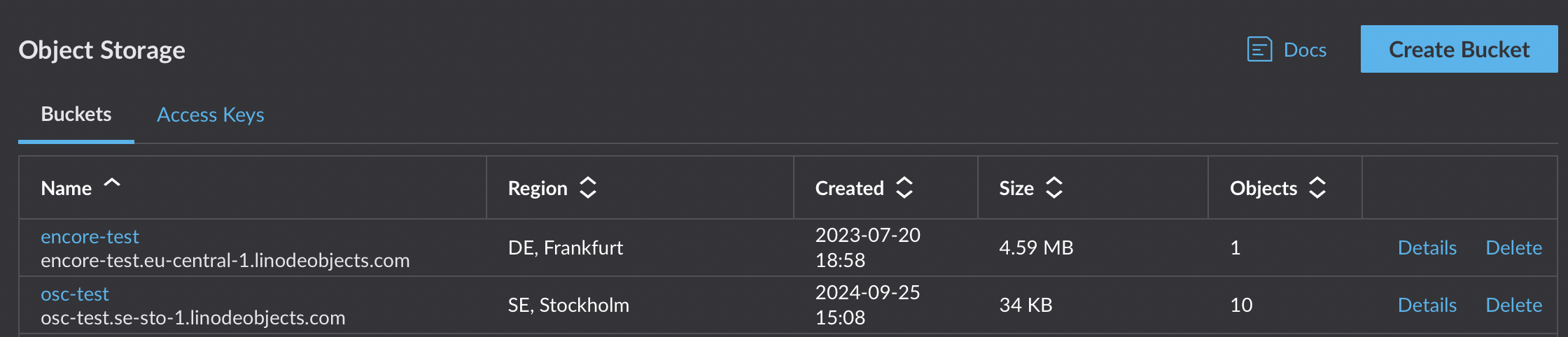

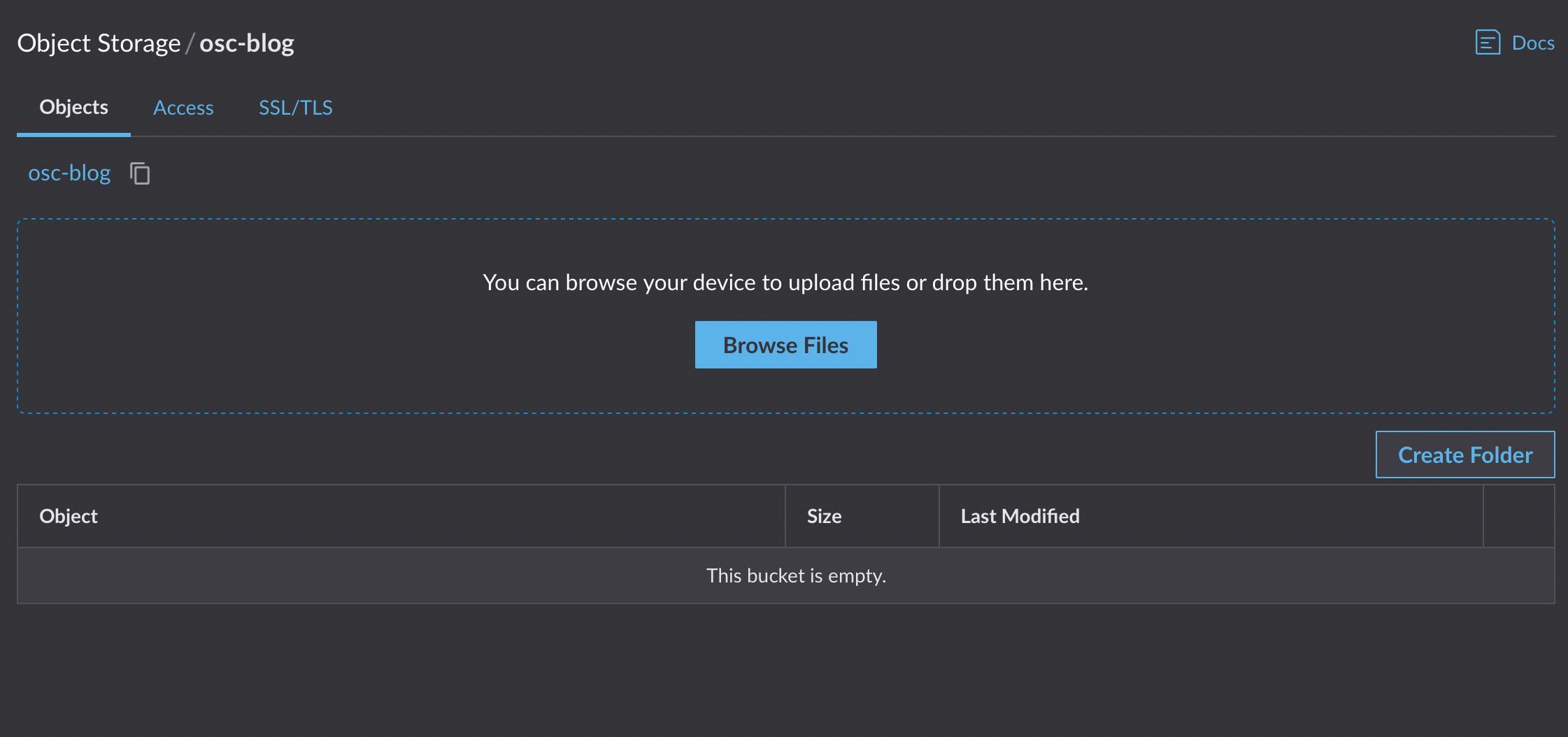

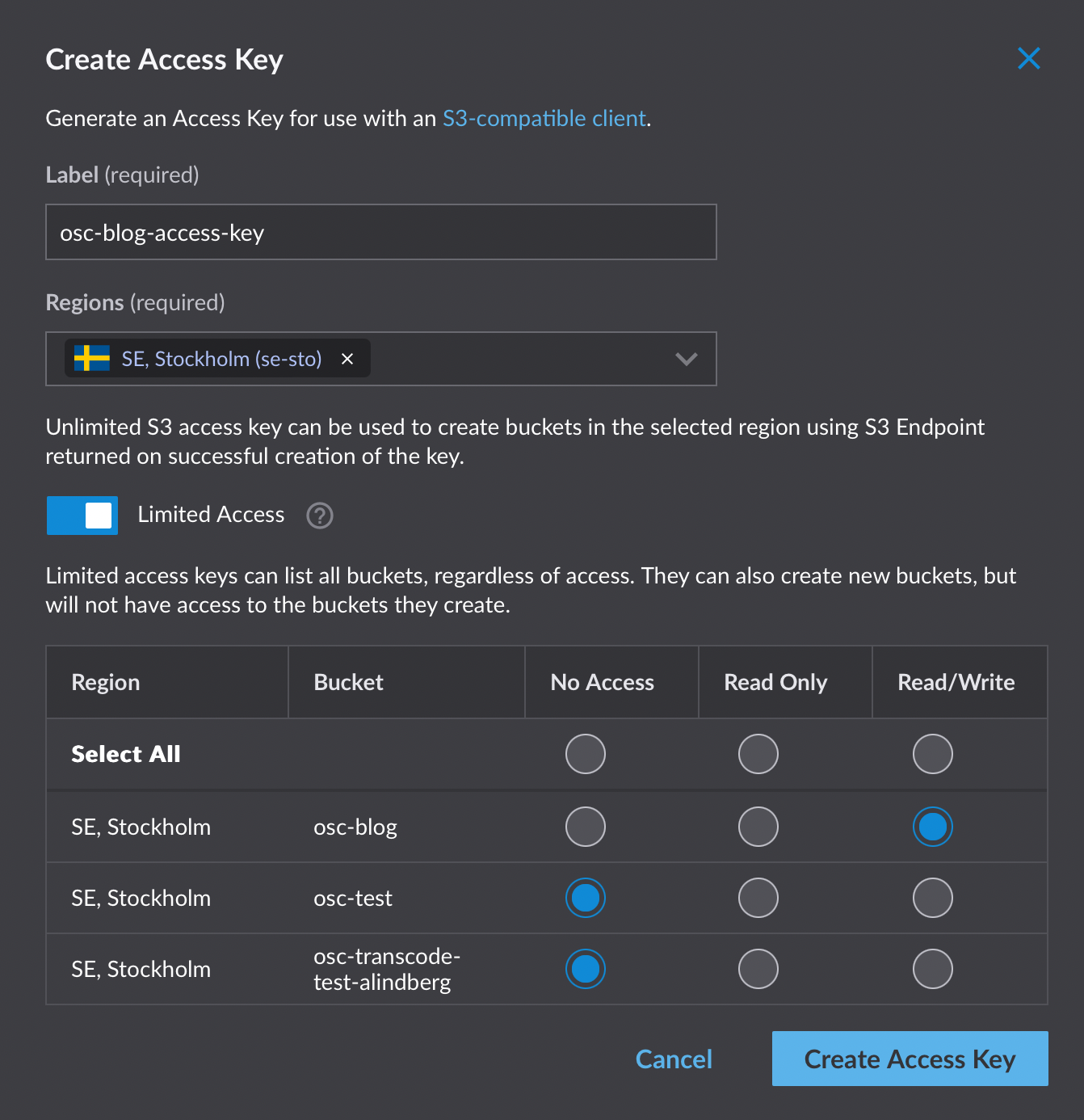

- An S3 bucket

The Terraform Configuration

Create a new Terraform file named main.tf. We’ll begin by defining the required provider for OSC.

terraform {

required_providers {

osc = {

source = "EyevinnOSC/osc"

version = "0.1.3"

}

}

}

provider "osc" {

pat = "<PERSONAL_ACCESS_TOKEN>"

environment = "prod"

}

Using Variables for Credentials

To avoid accidentally exposing sensitive credentials, we’ll use a variable for the Personal Access Token (PAT). This approach makes it easier to manage credentials securely. We also define the OSC environment as a variable to easily switch between dev and prod.

variable "osc_pat" {

type = string

sensitive = true

}

variable "osc_env" {

type = string

default = "dev"

}

You can then reference these variables in the provider block like so:

provider "osc" {

pat = var.osc_pat

environment = var.osc_env

}

Setting the Token

To set the osc_pat variable, you can either pass it via the command line using the -var flag or set it as an environment variable:

export TF_VAR_osc_pat=<PERSONAL_ACCESS_TOKEN>

Define the SVT Encore Resource

The first step in the transcoding pipeline is setting up an SVT Encore instance. This resource requires a name and, optionally, a profiles_url where transcoding profiles are stored.

Define the osc_encore_instance resource like this:

resource "osc_encore_instance" "example" {

name = "ggexample"

profiles_url = "https://raw.githubusercontent.com/Eyevinn/encore-test-profiles/refs/heads/main/profiles.yml"

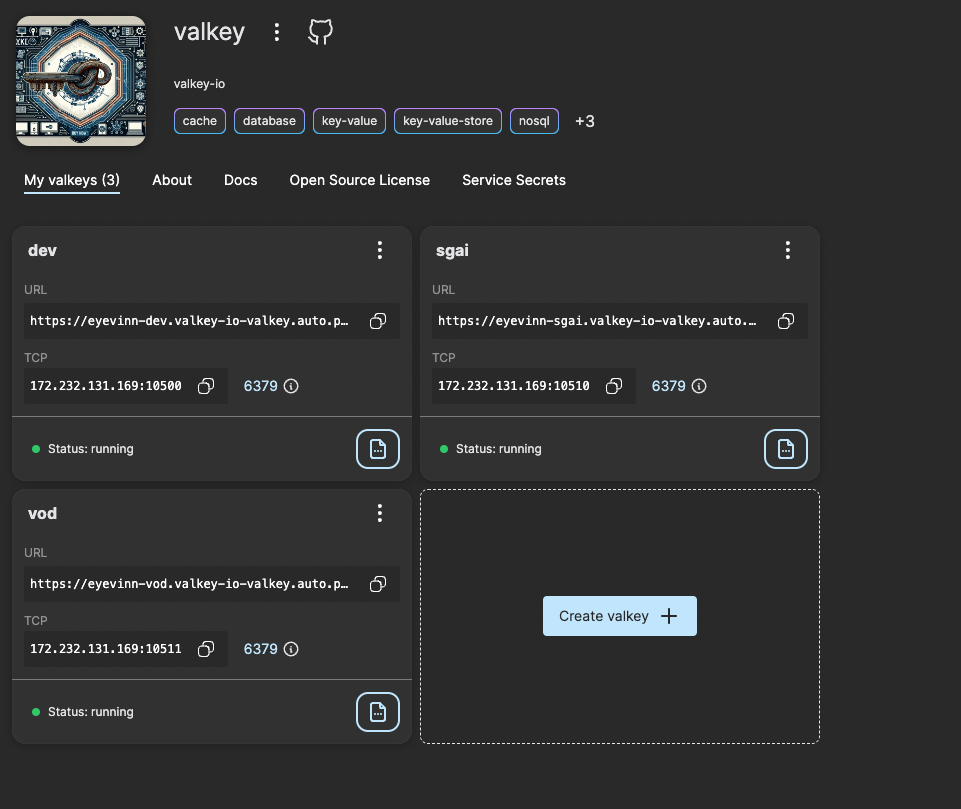

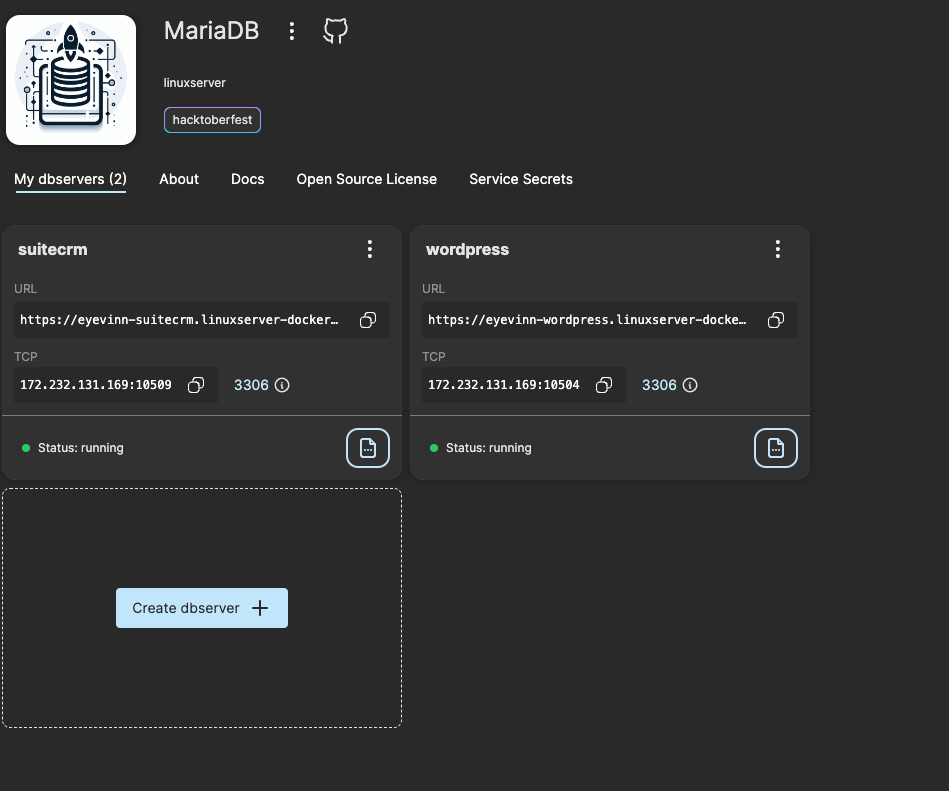

}Define the Valkey Instance

Next, we define a Valkey instance, which is a required component in the pipeline.

resource "osc_valkey_instance" "example" {

name = "ggexample"

}

Define the Callback Listener

The Encore Callback Listener connects to both the Valkey and Encore instances. It requires the redis_url and encore_url, which are derived from the earlier resources.
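As a rough illustration of the two URL shapes involved, the shell sketch below mimics what Terraform's built-in format and trimsuffix functions produce for this kind of input. The IP address, port, and Encore URL are made-up sample values, not real OSC endpoints.

```shell
# Made-up sample values standing in for the Valkey and Encore attributes.
valkey_ip="203.0.113.10"
valkey_port="6379"
encore_raw_url="https://encore.example.invalid/"

# Same shape as format("redis://%s:%s", ip, port) in a Terraform config.
redis_url=$(printf 'redis://%s:%s' "$valkey_ip" "$valkey_port")

# Same effect as trimsuffix(url, "/"): drop one trailing slash if present.
encore_url="${encore_raw_url%/}"

echo "$redis_url"    # redis://203.0.113.10:6379
echo "$encore_url"   # https://encore.example.invalid
```

Trimming the slash up front matters because paths are later appended with their own leading slash, which would otherwise yield a double slash in the request URL.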

The redis_url should use the Redis URL format (redis://<host>:<port>), and the encore_url should have no trailing slash:

resource "osc_encore_callback_instance" "example" {

name = "ggexample"

redis_url = format("redis://%s:%s", osc_valkey_instance.example.external_ip, osc_valkey_instance.example.external_port)

encore_url = trimsuffix(osc_encore_instance.example.url, "/")

redis_queue = "transfer"

}

Define the Retransfer Service

We also need to store AWS credentials as secrets for the Retransfer service. To manage this securely, we’ll use variables for the AWS access key ID and secret access key, and osc_secret resources to store them under fixed secret names.

variable "aws_keyid" {

type = string

sensitive = true

}

variable "aws_secret" {

type = string

sensitive = true

}

variable "aws_output" {

type = string

default = "s3://path/to/bucket"

}

resource "osc_secret" "keyid" {

service_ids = ["eyevinn-docker-retransfer"]

secret_name = "awsaccesskeyid"

secret_value = var.aws_keyid

}

resource "osc_secret" "secret" {

service_ids = ["eyevinn-docker-retransfer"]

secret_name = "awssecretaccesskey"

secret_value = var.aws_secret

}

Define the Encore Transfer Service

Finally, we define the Encore Transfer service, which will manage the transfer of transcoded media files to the specified AWS S3 bucket.

resource "osc_encore_transfer_instance" "example" {

name = "ggexample"

redis_url = osc_encore_callback_instance.example.redis_url

redis_queue = osc_encore_callback_instance.example.redis_queue

output = var.aws_output

aws_keyid = osc_secret.keyid.secret_name

aws_secret = osc_secret.secret.secret_name

osc_token = var.osc_pat

}

Define Outputs

To easily access dynamic endpoints, we can define outputs in Terraform. These outputs can be used in scripts or other automation tasks.

output "encore_url" {

value = trimsuffix(osc_encore_instance.example.url, "/")

}

output "encore_name" {

value = osc_encore_instance.example.name

}

output "callback_url" {

value = trimsuffix(osc_encore_callback_instance.example.url, "/")

}

Full Configuration File (main.tf)

Here’s the complete main.tf file:

terraform {

required_providers {

osc = {

source = "EyevinnOSC/osc"

version = "0.1.3"

}

}

}

variable "osc_pat" {

type = string

sensitive = true

}

variable "osc_env" {

type = string

default = "prod"

}

variable "aws_keyid" {

type = string

sensitive = true

}

variable "aws_secret" {

type = string

sensitive = true

}

variable "aws_output" {

type = string

}

provider "osc" {

pat = var.osc_pat

environment = var.osc_env

}

resource "osc_encore_instance" "example" {

name = "ggexample"

profiles_url = "https://raw.githubusercontent.com/Eyevinn/encore-test-profiles/refs/heads/main/profiles.yml"

}

resource "osc_valkey_instance" "example" {

name = "ggexample"

}

resource "osc_encore_callback_instance" "example" {

name = "ggexample"

redis_url = format("redis://%s:%s", osc_valkey_instance.example.external_ip, osc_valkey_instance.example.external_port)

encore_url = trimsuffix(osc_encore_instance.example.url, "/")

redis_queue = "transfer"

}

resource "osc_secret" "keyid" {

service_ids = ["eyevinn-docker-retransfer"]

secret_name = "awsaccesskeyid"

secret_value = var.aws_keyid

}

resource "osc_secret" "secret" {

service_ids = ["eyevinn-docker-retransfer"]

secret_name = "awssecretaccesskey"

secret_value = var.aws_secret

}

resource "osc_encore_transfer_instance" "example" {

name = "ggexample"

redis_url = osc_encore_callback_instance.example.redis_url

redis_queue = osc_encore_callback_instance.example.redis_queue

output = var.aws_output

aws_keyid = osc_secret.keyid.secret_name

aws_secret = osc_secret.secret.secret_name

osc_token = var.osc_pat

}

output "encore_url" {

value = trimsuffix(osc_encore_instance.example.url, "/")

}

output "encore_name" {

value = osc_encore_instance.example.name

}

output "callback_url" {

value = trimsuffix(osc_encore_callback_instance.example.url, "/")

}

Running the Pipeline

Before running Terraform, ensure your environment variables are set:

export TF_VAR_osc_pat=<PERSONAL_ACCESS_TOKEN>

export TF_VAR_aws_keyid=<AWS_KEYID>

export TF_VAR_aws_secret=<AWS_SECRET>

export TF_VAR_osc_env=prod

Once the environment variables are configured, you can run the pipeline with:

terraform init

terraform apply

Follow the prompts to create the infrastructure. Once complete, the instances should be successfully provisioned.

Viewing Outputs

To view the outputs from Terraform, run:

terraform output

Example output:

callback_url = "https://eyevinnlab-ggexample.eyevinn-encore-callback-listener.auto.prod.osaas.io"

encore_url = "https://eyevinnlab-ggexample.encore.prod.osaas.io"

encore_name = "ggexample"

To view a specific output, such as the encore_url:

terraform output encore_url

"https://eyevinnlab-ggexample.encore.prod.osaas.io"

Encode Job

To initiate a transcoding job, you can either use the Swagger UI, as described in the previous post, or run the script provided below. The script accepts the URL of the media you want to encode as an input argument and requires curl and jq to be installed.

encoreJob.sh

#!/bin/bash

# Ensure MEDIA_URL argument is provided

if [ -z "$1" ]; then

echo "Usage: $0 <MEDIA_URL>"

exit 1

fi

# Assign the first argument to MEDIA_URL

MEDIA_URL="$1"

# Retrieve values from Terraform output

ENCORE_URL=$(terraform output -raw encore_url)

EXTERNAL_ID=$(terraform output -raw encore_name)

EXTERNAL_BASENAME=$(terraform output -raw encore_name)

CALLBACK_URL=$(terraform output -raw callback_url)

OSC_PAT=$TF_VAR_osc_pat

OSC_ENV=$TF_VAR_osc_env

# Validate required variables

if [ -z "$ENCORE_URL" ]; then

echo "Error: Terraform output 'encore_url' is missing."

exit 1

fi

if [ -z "$EXTERNAL_ID" ]; then

echo "Error: Terraform output 'encore_name' is missing."

exit 1

fi

if [ -z "$EXTERNAL_BASENAME" ]; then

echo "Error: Terraform output 'encore_name' is missing."

exit 1

fi

if [ -z "$CALLBACK_URL" ]; then

echo "Error: Terraform output 'callback_url' is missing."

exit 1

fi

if [ -z "$OSC_PAT" ]; then

echo "Error: Environment variable 'OSC_PAT' (TF_VAR_osc_pat) is not set."

exit 1

fi

if [ -z "$OSC_ENV" ]; then

echo "Error: Environment variable 'OSC_ENV' (TF_VAR_osc_env) is not set."

exit 1

fi

TOKEN_URL="https://token.svc.$OSC_ENV.osaas.io/servicetoken"

ENCORE_TOKEN=$(curl -X 'POST' \

$TOKEN_URL \

-H 'Content-Type: application/json' \

-H "x-pat-jwt: Bearer $OSC_PAT" \

-d '{"serviceId": "encore"}' | jq -r '.token')

curl -X 'POST' \

"$ENCORE_URL/encoreJobs" \

-H "x-jwt: Bearer $ENCORE_TOKEN" \

-H 'accept: application/hal+json' \

-H 'Content-Type: application/json' \

-d '{

"externalId": "'"$EXTERNAL_ID"'",

"profile": "program",

"outputFolder": "/usercontent/",

"baseName": "'"$EXTERNAL_BASENAME"'",

"progressCallbackUri": "'"$CALLBACK_URL/encoreCallback"'",

"inputs": [

{

"uri": "'"$MEDIA_URL"'",

"seekTo": 0,

"copyTs": true,

"type": "AudioVideo"

}

]

}'

Example Usage

To run the script with an example media URL, use the following command:

./encoreJob.sh http://commondatastorage.googleapis.com/gtv-videos-bucket/sample/WeAreGoingOnBullrun.mp4

This script will trigger a transcoding job for the specified media file and send progress updates to the provided callback URL. Make sure that all Terraform outputs are correctly set before running the script.
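Since the script depends on terraform, curl, and jq, a small pre-flight check can catch a missing tool before any request is sent. The helper below is only a sketch and is not part of the original script; the function name missing_tools is made up for illustration.

```shell
# Print the names of any commands from the argument list that are not on PATH.
# 'missing_tools' is a hypothetical helper name, not part of encoreJob.sh.
missing_tools() {
  missing=""
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      # Append to the list, adding a separating space only when needed.
      missing="${missing:+$missing }$tool"
    fi
  done
  printf '%s\n' "$missing"
}

# Report what is missing among the tools the job script calls.
not_found=$(missing_tools terraform curl jq)
if [ -n "$not_found" ]; then
  echo "Missing required tools: $not_found" >&2
fi
```

The check only prints a warning here; in a real script you would probably exit with a non-zero status when the list is not empty.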

Once a job has been submitted, you can follow its progress by going to the Encore Callback Listener service and opening the instance logs to check that it is receiving the callbacks.

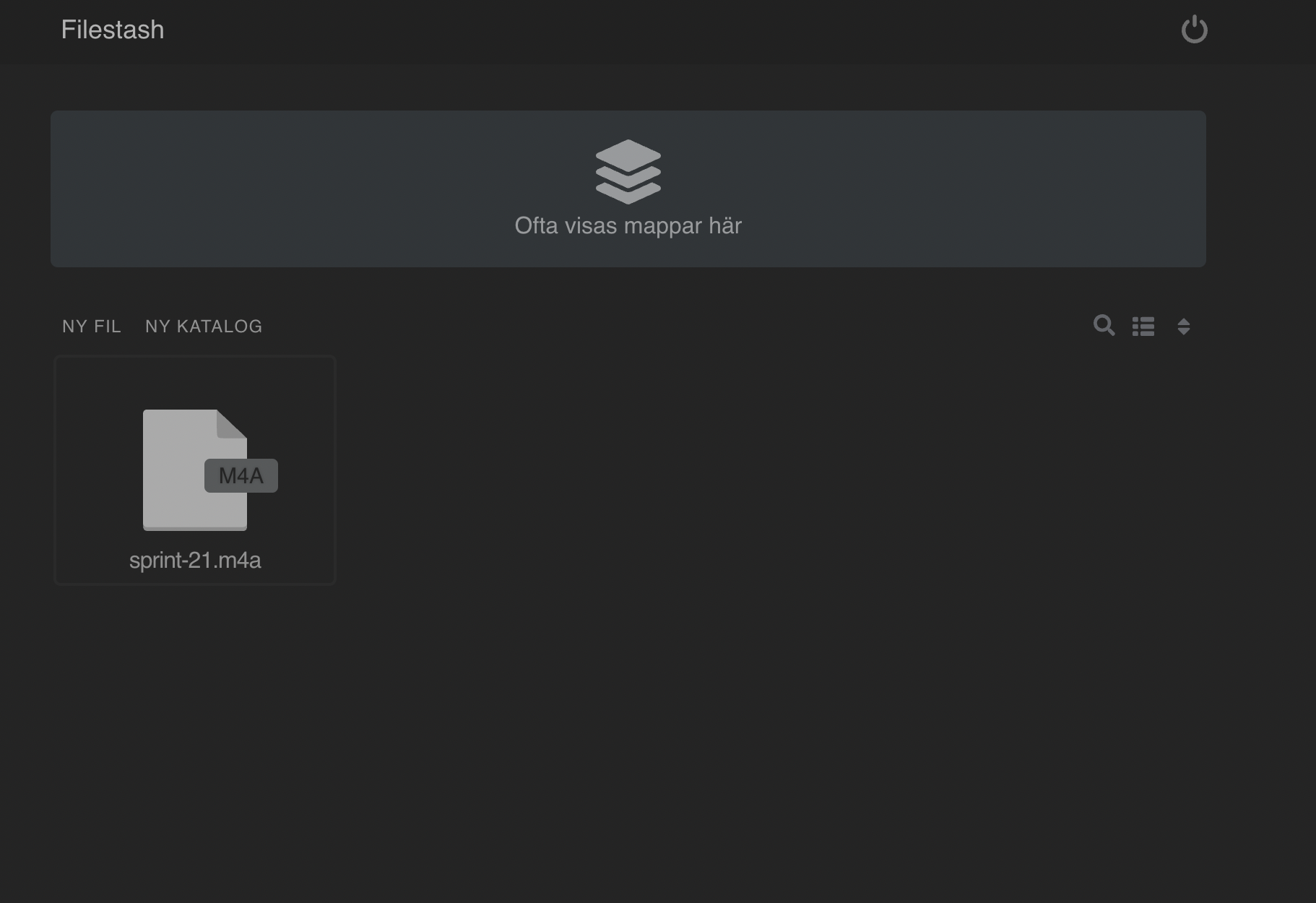

When transcoding completes, a job is placed on the transfer queue, where it is picked up by the Encore Transfer service. Once all transfer jobs have finished, the output bucket in this example will contain a set of files: variants of the source at different resolutions and bitrates.

Destroy

When transcoding is finished and no more jobs are needed, you can take down the running instances with a single command:

terraform destroy

Conclusion

You now have a fully-fledged video transcoding pipeline for preparing video files for streaming using SVT Encore, along with supporting services. The entire setup is based on open-source software, and you don’t need to set up your own infrastructure to get started. Additionally, the pipeline leverages Terraform for managing and deploying the infrastructure, making it easy to get up and running. Should you later choose to host everything yourself, you’re free to do so, as all the code and resources demonstrated here are available as open source.